Yes, though not by as much as one might think. In real-world test shots of a black and white resolution chart, a Bayer masked sensor combined with a top-notch demosaicing algorithm resolves about 1/√2 (roughly 70%) of what a monochrome sensor with no Color Filter Array (CFA) resolves under the same conditions with the same high-performing lens.
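To put that 1/√2 figure in terms of pixel counts, here is a rough back-of-the-envelope sketch. It assumes the factor applies to linear resolution (line pairs across the frame), so the equivalent pixel count scales with its square. The function name and the 20 MP example value are illustrative only, not measurements.

```python
import math

def mono_equivalent_megapixels(bayer_megapixels, linear_factor=1 / math.sqrt(2)):
    """Estimate the monochrome pixel count that would resolve similar detail.

    Assumption: linear_factor scales linear resolution, so the pixel count
    scales with linear_factor squared.
    """
    return bayer_megapixels * linear_factor ** 2

# Illustrative value: a hypothetical 20 MP Bayer masked sensor.
print(round(mono_equivalent_megapixels(20.0), 1))
```

In other words, under this assumption the Bayer sensor behaves roughly like a monochrome sensor with half as many pixels when shooting a black and white chart.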

But you also give up a LOT of flexibility in controlling the tonal values of differently colored objects.

When shooting with an unmasked monochrome sensor, the tonal relationships between objects of different colors are locked in as soon as the sensor is exposed. Any filtering to make similarly bright objects of one color a brighter shade of grey than equally bright objects of another color must be done using a physical filter at the time of exposure. The monochrome sensor only records a shade of grey. The raw files can no longer differentiate between objects that were different colors.

If we use a red filter when we shoot in monochrome without a Bayer mask, we can't go back based only on the information contained in the raw data and make it look like we used a green filter, or even an orange filter after the fact. With a digital raw file from a Bayer masked sensor, the possibilities of adjusting relative tonal values based on the colors of objects in the scene are near endless!
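A minimal sketch of why that flexibility exists: once each pixel has separate red, green, and blue values, a "filter" applied after the fact is just a choice of weights when mixing the channels down to grey. The weights, pixel values, and names below are hypothetical and chosen only for illustration; real raw converters use more elaborate mixing than this.

```python
# Hypothetical channel-mixer weights (R, G, B); illustrative, not from any real converter.
NEUTRAL = (1 / 3, 1 / 3, 1 / 3)
GREEN_FILTER = (0.0, 1.0, 0.0)
RED_FILTER = (1.0, 0.0, 0.0)

def to_grey(pixel, weights):
    """Mix a linear (r, g, b) pixel in [0, 1] down to a single grey value in [0, 1]."""
    r, g, b = pixel
    wr, wg, wb = weights
    value = r * wr + g * wg + b * wb
    return min(1.0, max(0.0, value))

# Made-up pixel values: haze lit by red light, and a subject with more mixed color.
red_fog = (0.8, 0.1, 0.1)
performer = (0.6, 0.4, 0.3)

for name, weights in (("neutral", NEUTRAL), ("green", GREEN_FILTER), ("red", RED_FILTER)):
    fog = to_grey(red_fog, weights)
    subject = to_grey(performer, weights)
    print(f"{name:8s} fog={fog:.2f} subject={subject:.2f}")
```

With a monochrome sensor, only one of those weightings exists in the file: whichever physical filter was on the lens at the moment of exposure.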

If your ultimate goal is to get the highest resolution possible of flat B&W test charts, then the monochromatic sensor is the clear winner.

If, on the other hand, your goal is to get the best possible results shooting in challenging lighting situations, then the choice is not so clear-cut.

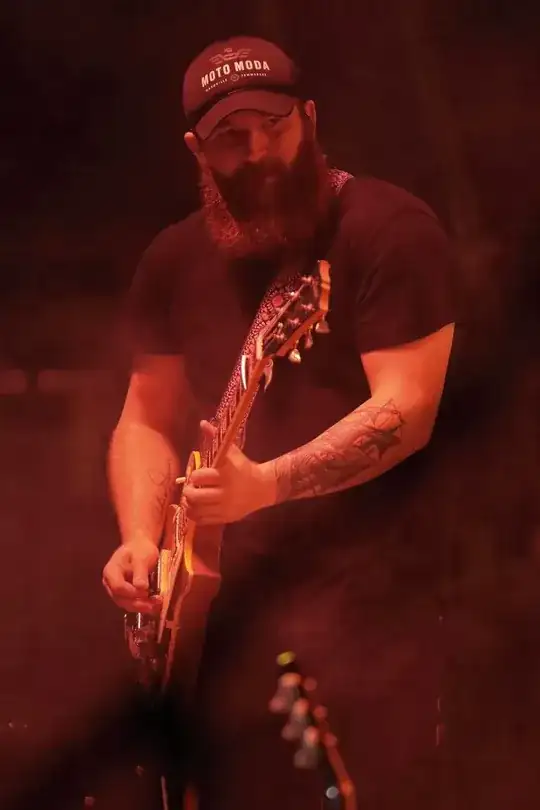

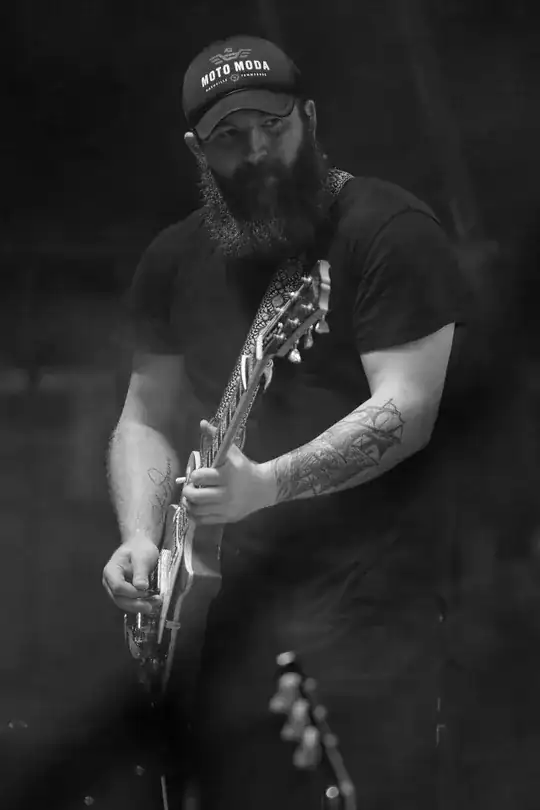

Consider this shot, which is basically how the scene appeared to my eyes when I shot it on an outdoor festival stage at night.

EOS 7D Mark II + EF 70-200mm f/2.8 L IS II, ISO 3200, f/2.8, 1/500 second.

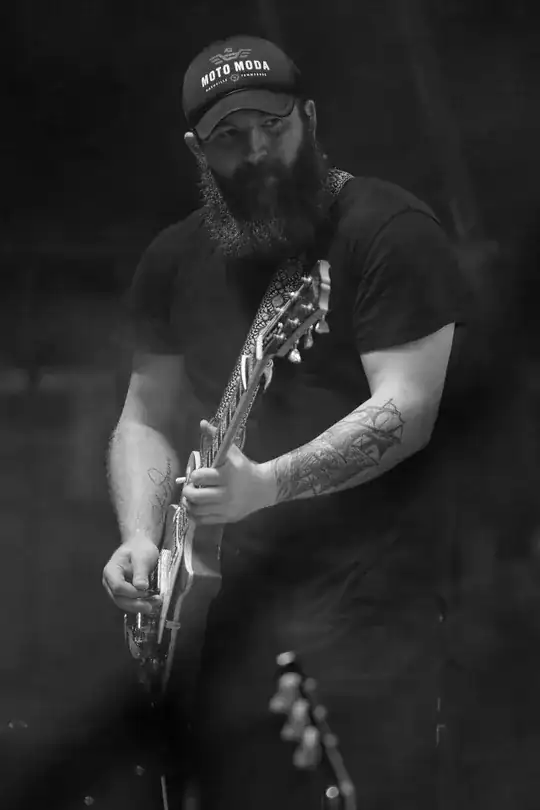

Converting it to monochrome without using any synthetic color filters after the fact would give a result something like this:

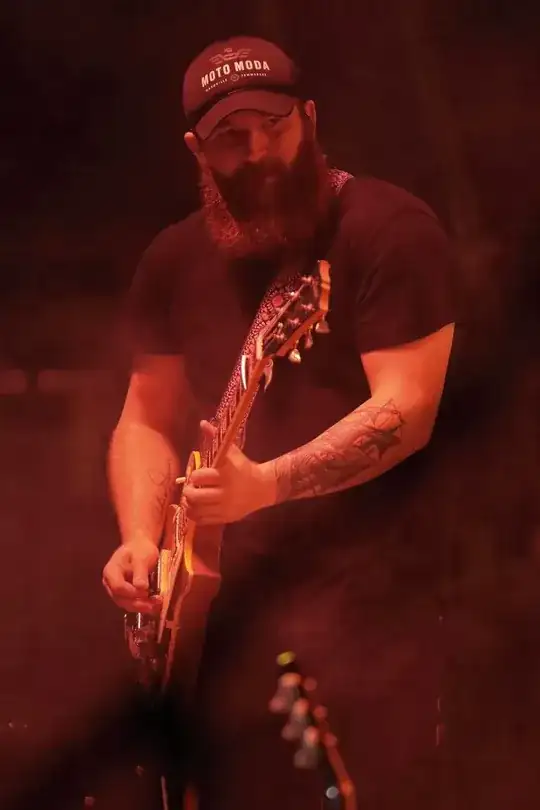

Applying a green filter after the fact, to increase contrast under the red light and to reduce the diffusion caused by the onstage "fog" it was illuminating, gave me this:

Putting a green filter in front of the lens on a monochrome sensor would have given slightly more theoretical resolution. But I was shooting a moving subject handheld in low light, while standing on a temporary stage that was vibrating with music played at high volume, and the resulting blur would probably have obliterated that tiny difference. Plus, if I still had that green filter on the lens a few seconds later when the stage lights changed to predominantly blue (or green, or white, or orange), I'd have been scrambling to remove it and put on an orange, red, or blue one before shooting the next photo. By which time the light would have changed colors again...

For a more complete technical explanation of how Bayer CFAs affect resolution and quantum efficiency compared to monochrome without a CFA, please see this answer to "How does shooting on dedicated monochrome digital cameras compare to shooting in monochrome mode on full-colour digital cameras?"

In the end, it depends upon which tradeoffs are more important to you. How these considerations are weighted will vary based on what one is shooting and the pace at which it must be shot. A fast-paced environment with rapidly changing lighting conditions would weight things one way. A more methodical shooting situation, in which a plethora of physical color filters are available and can be swapped without losing the shot because the scene changed in the interim, would tend to be weighted the other way. My work lives more in the former situation, but yours might be in the latter.