I am a graduate student in math, coming up on the end of my master's, and several of my classes require LaTeX submissions. These classes are all very difficult to begin with, and spending multiple hours on LaTeX only to submit an incomplete homework assignment feels unproductive to me. I am getting more efficient at LaTeX, but I have also found tools like https://mathpix.com/, which let me upload PDFs of my handwritten work; their AI then outputs LaTeX code that I can copy into Overleaf and tweak for accuracy and formatting. Is what I have described plagiarism, or some other (commonly) punishable academic offense?

Could this be the most brilliant guerrilla marketing campaign ever? ;) – Ian Feb 08 '24 at 23:55

Just a lowly student swimming in a sea of TeX. – SBJ Feb 09 '24 at 00:02

Does this tool work for handwritten text? I would assume that they usually take typed text in pdf format and convert back to LaTeX. – quarague Feb 09 '24 at 08:37

I would say no. Eventually, your ideas are put to paper. Cite where appropriate and you will be fine. Reducing the tedium of TeXing is ok. – AlvinL Feb 09 '24 at 09:44

You tagged this with generative-ai, but AFAICT, the tool this is about is not generative AI, which is also crucial with respect to plagiarism issues. – Wrzlprmft Feb 09 '24 at 11:20

@Wrzlprmft I don't know about this specific case, but I think generative AI could reasonably be used in such a tool. It's not just about matching squiggly lines to LaTeX commands; the software would work much better if it incorporated an LLM trained on math formulas. For example, some symbols might be impossible to read correctly unless you can guess which field the formula is from, and being able to predict likely following symbols given a prefix of a formula would also be immensely useful. – Nobody Feb 09 '24 at 12:11

@Wrzlprmft I.e. the software would be solving the same problem, whether it tries to guess what you intended to write, or if it corrects small mistakes. At graduate level I think this should be fine and not cheating, but might still be against the rules. – Nobody Feb 09 '24 at 12:12

@Nobody: "I think generative AI could reasonably be used in such a tool": Sure, but it can also lead to huge ethical, legal, or quality problems. The designers of the tool may even have explicitly avoided generative AI on account of questions like these. Anyway, my main point is that this is a crucial question to clarify. – Wrzlprmft Feb 09 '24 at 12:20

But LaTeX is just a formatting tool. It does not generate ideas. How is it plagiarism? – stackoverblown Feb 09 '24 at 12:34

If you are struggling to write quickly in LaTeX, maybe you should try out Typst. I felt a noticeable jump in my productivity when I switched to Typst. It also has a free local compiler available here. – mhdadk Feb 09 '24 at 13:57

Why are these classes requiring you to use LaTeX? Just so you can submit PDFs, or so that you learn LaTeX? – Kimball Feb 09 '24 at 17:08

@Nobody: That wouldn't be a generative AI though. It would use a lot of the same large language model technology underpinning generative AI, but in the end it's doing maximum-likelihood decoding of the input. – Ben Voigt Feb 09 '24 at 17:41

Seems like I didn't have a great grasp of what "plagiarism" really meant. It still felt like an ethical gray area; thank you all for clearing up some of my misconceptions. – SBJ Feb 09 '24 at 20:27

If in doubt, ask your supervisor. – user253751 Feb 10 '24 at 02:04

Is it plagiarism to use a Fortran (or C, etc.) compiler to translate your Fortran programs to machine code? – eigengrau Feb 10 '24 at 06:42

@BenVoigt I agree, but for plagiarism/cheating purposes it might still be a problem. – Nobody Feb 10 '24 at 15:54

This website is way too obsessed with "AI". – Brady Gilg Feb 11 '24 at 19:25

14 Answers

Overall, no. The tool you use to write something hasn't got the same status as another author. I think it definitely would be treated differently if it was "found out".

One caution: be careful that no generated text is accidentally lifted from another document (I think this is unlikely), because that can be plagiarism. So just use the AI to help generate it, then edit it and check it, and it's fine. On the whole, I think that's a fair way to look at this issue.

I only intend to generate TeX from my own handwritten work, so that shouldn't be an issue. – SBJ Feb 09 '24 at 00:08

@SBJ I think the concern here was that the AI might have been trained on other people's work, so even if you only show it your work, it might introduce traces of other people's work into its output. As an extreme example, imagine you write an equation that is somewhat similar to an equation in someone else's work that the AI was trained on; it's conceivable that the AI might put that other equation, instead of yours, in its output. But you have to check the output for mistakes anyway, so everything should be fine. – Stef Feb 09 '24 at 13:17

@SBJ As another extreme example, imagine you write a complicated handwritten equation; there is a straightforward-but-complicated way to write this equation in LaTeX, and a very creative way. If the AI has been trained on someone else's work, the AI might use the very creative way, and that could be considered plagiarism even if the resulting equation is the same (but the resulting LaTeX code is not the same). But that's a somewhat extreme example, and writing LaTeX code is not an extremely creative endeavour, so it's fine. – Stef Feb 09 '24 at 13:20

@Stef Assuming the work is being graded on the mathematics as conveyed in the PDF and not on the LaTeX coding itself, I don't think even that situation would count as plagiarism. (I certainly wouldn't count it as plagiarism in my classes.) What you're suggesting feels to me like it's getting close to saying the authors of every piece of software you use should be credited as coauthors of your work. – Mark Meckes Feb 09 '24 at 14:53

(Which, by the way, I acknowledge is not always a cut-and-dried question, as evidenced by other questions on this site.) – Mark Meckes Feb 09 '24 at 14:53

@MarkMeckes My point is not about the software, it's about the training data used while training large machine learning models. Imagine if I ask an LLM to translate a sentence from another language into English, and the English sentence outputted by the LLM turns out to be a sentence taken straight from a Harry Potter book. Not only is this plagiarism if I claim that I wrote it, but it's also copyright infringement regardless of my claims. These things do happen regularly to people who use large language models or image-generation models. – Stef Feb 09 '24 at 14:59

@MarkMeckes And the issue is not just for literature and drawing; this has happened repeatedly to programmers who used code-generation models that were trained using code from github and other code repositories. Of course it's harder to recognise programming code than to recognise Harry Potter prose, and it's harder to recognise short LaTeX code than long programming code, and writing LaTeX is a lot less creative endeavour than writing novels, so it's less of an issue for LaTeX than for literature, but still the issue does exist. – Stef Feb 09 '24 at 15:02

@Stef Yes, I get your point. The difference here is that (I assume) the student isn't turning in the LaTeX code, just the compiled PDF. If they are turning in the LaTeX code itself, and especially if they're being graded on the LaTeX coding, then I agree with you completely. – Mark Meckes Feb 09 '24 at 15:11

Another issue is that part of the goal of these assignments may be to build proficiency in LaTeX. So check with the instructors. – Kimball Feb 09 '24 at 17:09

A very interesting issue! Although I do pay a bit of attention to various tech advances, I was not aware that there was any mechanism/app/device that could convert handwriting to TeX.

If any of my math grad students told me that they were using such a thing, I'd probably congratulate them for being ahead of the curve. :) But/and also advise them to double-check! :)

But, yes, many situations would declare that this was not ok... So, you have to ask in advance. I don't think it's "plagiarism" per se, but just maybe "against the rules".

Also, be sure to acknowledge "spell-check" in your bibliography. :) – paul garrett Feb 08 '24 at 23:58

Would they? This is just OCR but outputting TeX instead of Unicode. As long as the output matches the input and isn't "corrected" along the way, I see no difference between this and OCR, and I'd be surprised if OCR were considered bad. – David A. Craven Feb 09 '24 at 00:27

MathPix is new to me, but Maple has a cell phone app that can read my handwriting and convert it to nice-looking math; I was introduced to that at the JMMs a few years back. Though this MathPix thing looks like a major improvement, as it seems to be intended to handle things at a more document level. – Xander Henderson Feb 09 '24 at 00:28

"many situations would declare that this was not ok": I'm curious about your point. OP is writing their own equations; it's just that instead of a keyboard, they are using paper, pencil, a camera, and a bunch of processors to eventually put their own thoughts into LaTeX. Why would this be problematic? Mathpix is practically an advanced OCR, not a generative language model building on millions of previous thoughts. The only things it knows are reading formulas and writing TeX. Unlike LLMs, its output contains exactly the same information as its input, only in a different format. – Neinstein Feb 09 '24 at 08:26

@Neinstein If this uses an LLM for the recognition, then it needed a lot of other people's thoughts to learn that this elongated S squiggle in your handwriting should be transformed into \int. But I agree that this should be OK and that there shouldn't be any plagiarism here under any sensible definition of plagiarism. – quarague Feb 09 '24 at 08:41

Not to nitpick, but that would be just plain old AI image recognition, not an LLM. The problem with LLMs is that they spit out others' thoughts, curated and tailored, and by using those thoughts you may plagiarise the sources the LLM used. I don't see this happening with an IR model, since the only information it spits out is that "many other people write 'S' similarly to this squiggle you drew, so it's probably an 'S'." This information is unrelated to the content going into the paper. That's why I don't get the point, neither in the question nor in the answer. – Neinstein Feb 09 '24 at 13:12

@Neinstein Classifying one squiggly handwritten symbol as being one LaTeX symbol is just image recognition, but converting a complicated handwritten equation into complicated LaTeX code is more involved than that. Compare "looking up one French word in a French-English dictionary" and "converting a French sentence into an English sentence". I agree that writing LaTeX code is a lot less creative than writing full English sentences, but still, I think MathPix is doing much more than just converting each symbol, and it's not obvious where to draw the line between "creative" and "not creative". – Stef Feb 09 '24 at 13:44

@Stef As long as it is only typesetting, and typesetting is not part of the learning outcomes of the course, it really does not matter. I frankly would rather not have to worry about kerning, whether to use \stackrel or \substack or \overbox, how to make my commutative diagram look good with annotated morphisms, or making sure that the 3D lines of the Hamming cubes properly shadow the lines behind them in the visual cone. This is work that might have been outsourced to some assistant in the past and adds nothing to the math. – Captain Emacs Feb 09 '24 at 14:31

@paulgarrett I am sure I do when I read, and I am sure I care that the TeX package does, but I do not wish to become a typesetting expert, at least not forcedly so ;-) – Captain Emacs Feb 11 '24 at 00:57

@Neinstein, sorry to respond so belatedly: people (in control) do often make unreasonable rules, and do not allow negotiation! Who knew? :) :) In the 1960s, there were questions about whether slide rules were allowed... because kids who knew how to use them would have an... unfair?!?... advantage. :) – paul garrett Feb 11 '24 at 20:44

@quarague "If this uses an LLM for the recognition [then] it needed a lot of other peoples thoughts to learn that..." That's how all OCR (and machine translation, and speech recognition, and...) works. This is just OCR followed by translation to LaTeX. – Ray Feb 12 '24 at 16:32

Copying the output of a Large Language Model might be cheating, depending on the rules of the game you're playing, but unless the LLM itself is reproducing the work of a human author, then copying the output of the LLM cannot be plagiarism by any usual definition of the word (see final note, below). Plagiarism requires a human on both branches of the plagiariser/plagiarised fork.

If you are being tested or examined on your ability to write LaTeX, then having a machine produce the LaTeX for you would be considered cheating in the same way that the use of a calculator would be looked upon as cheating if you were being tested on your ability with mental arithmetic. It doesn't sound as if that is an issue in your case.

I have experimented quite a lot with using an LLM to produce LaTeX code, either from my handwriting or from a scanned (printed) document that has been poorly typeset. Here are two examples from handwritten material. The "mathematics" is of course complete junk but the results are impressively useful even if not completely correct.

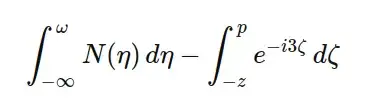

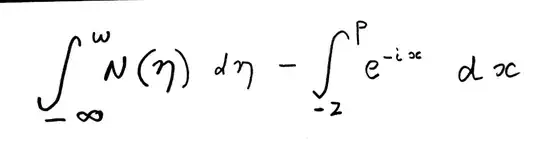

Example 1

In this first example, I am interested in whether the LLM can get the basic structure of the equations correct.

This is the LaTeX code that the LLM produced.

\[ \int_{-\infty}^{\omega} N(\eta) \, d\eta - \int_{-z}^{p} e^{-i3\zeta} \, d\zeta \]

which is set as

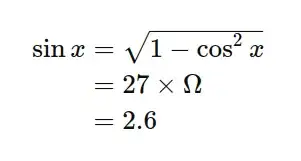

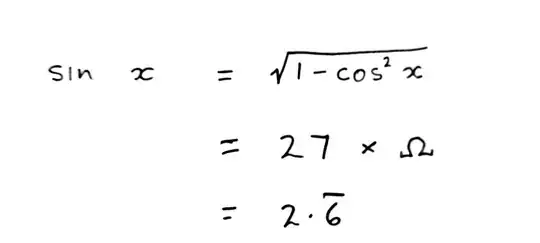

Example 2

In the second example I was interested in whether the LLM could correctly align the equations and also how much of the details (e.g., the bar over one of the digits) would be correctly produced.

This was the LaTeX code ...

\begin{align*}
\sin x &= \sqrt{1 - \cos^2 x} \\
&= 27 \times \Omega \\
&= 2.6
\end{align*}

which is set as

As you can see, the results aren't perfect but they are nonetheless very useful.
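The two snippets the LLM produced are fragments, not complete documents. Here is a minimal sketch of how they could be pasted into a compilable Overleaf file. The `article` class and the preamble are my own assumptions, not part of the LLM output. One point matters in practice: `align*` is provided by the `amsmath` package, so Example 2 will not compile without `\usepackage{amsmath}`.

```latex
\documentclass{article}
% align* is defined by amsmath; plain LaTeX does not provide it
\usepackage{amsmath}

\begin{document}

% Example 1, as produced by the LLM (display math, no extra packages needed)
\[ \int_{-\infty}^{\omega} N(\eta) \, d\eta - \int_{-z}^{p} e^{-i3\zeta} \, d\zeta \]

% Example 2, as produced by the LLM (the & marks the alignment point)
\begin{align*}
  \sin x &= \sqrt{1 - \cos^2 x} \\
         &= 27 \times \Omega \\
         &= 2.6
\end{align*}

\end{document}
```

The "tweak for accuracy" step then happens directly in this source. For example, a dropped bar over a digit could be restored by hand by wrapping the relevant digit in `\overline{...}`.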

My advice? Check the rules of the game. Are you being tested on your ability to do mathematics, or on your ability to typeset mathematics? If it is the former, and not the latter, then go ahead and use the LLM. It will potentially save you an immense amount of time (initially) and you will very quickly become familiar with the idiom of LaTeX so that:

- You no longer need to rely on the LLM to the same degree but will be able to type your LaTeX directly, and

- You will be able to give more nuanced instructions to your LLM so that it can set the mathematics in exactly the way that you want.

Note: the definition used above is from the Cambridge Dictionary of English: "plagiarism: the process or practice of using another person's ideas or work and pretending that it is your own".

Minor technical point: mathpix.com does not appear to use an LLM, but a different AI/ML technique that does image/handwriting recognition. – James_pic Feb 09 '24 at 11:09

LLMs, like pretty much all machine learning techniques, rely on training data. In most cases, this training data was created by humans, so it can very much be plagiarism if you try to pass off the LLM's output as your own and the LLM's output has recognisable traces of someone else's work. – Stef Feb 09 '24 at 13:37

I'm sorry, but your point about plagiarism is just wrong, and it is not supported by various other dictionaries and sources (1, 2, 3, 4). Plagiarizing is the act of presenting work you did not do, work that you had no hand in creating, as though it were yours. Arguing that you cannot plagiarize a machine misses the entire point of why plagiarism is wrong. – terdon Feb 09 '24 at 14:21

The links I gave were the result of just a few seconds' search. Just because the author of one dictionary used the unfortunate term "person" doesn't mean that you require the author to be a human being. All it takes to plagiarize is to pretend you produced work that was produced by someone other than you. – terdon Feb 09 '24 at 14:22

Agree with @terdon, the definition of plagiarism here seems too narrow. Suppose you copy an essay off the internet and submit it as your own work. I have a hard time accepting that it is impossible to tell whether that wholesale copying of text and false representation of it as your own is plagiarism or not, unless we also know for certain whether the original text came from a person or an LLM. In an age where LLMs can pass the Turing Test, it's not even possible to tell whether something was written by a person or a machine, meaning "I copied an LLM, not a person" is always an available defense. – Nuclear Hoagie Feb 09 '24 at 15:46

@NuclearHoagie: Normal LLMs are trained on the writings of real humans, so you're still indirectly copying humans. The legalities are the subject of various court cases. This is all beside the point, of course: as terdon says, what matters is presenting something as your own creative work when it's not. E.g., if I claimed to have written some assembly-language code but actually copied the output of an optimizing compiler, like GCC, Clang, or MSVC. Or a more obscure one, so that experienced humans might not recognize its tendencies... Current compilers are not AI at all, just human-built machinery. – Peter Cordes Feb 09 '24 at 16:06

When you say "experimented quite a lot", were your illustrated experiments produced using https://mathpix.com/ like the OP's, or were you using some other LLMs/tools/whatever? If not Mathpix, could you elaborate on what tools you used to go from scanned PDFs of handwritten pages to LaTeX source? Thanks. – eigengrau Feb 10 '24 at 07:11

@terdon ... "I'm sorry but your point about plagiarism is just wrong". You shouldn't feel you have to apologize for expressing a contrary view; it's OK. – CrimsonDark Feb 10 '24 at 11:38

@eigengrau I have experimented a lot with ChatGPT, and it was to ChatGPT that I uploaded the specific illustrations I used in my answer. I have also uploaded pages from several old printed works that were so dense and badly typeset that I found them impossible to read. By having ChatGPT scan them and produce the LaTeX code, I have been able to get the math double-spaced instead of compressed single-spaced... and finally read the papers. I've jotted long notes in a notebook, then uploaded those handwritten notes and had them set beautifully. I have to write a bit more neatly than usual! – CrimsonDark Feb 10 '24 at 11:43

@CrimsonDark Thanks a lot for the detailed info. I have lots of handwritten notes, with lots of math, that I've already scanned as PDF pages ("one small step for a man"...). The further possibility of having them automatically LaTeX'ed hadn't even occurred to me (..."one giant leap for mankind"). I'd have thought I'd get to walk on the Moon first. Can't wait to give it a try. Thanks again, Crimson. – eigengrau Feb 10 '24 at 16:41

@eigengrau I've found this instruction to ChatGPT to be a good starter (it sometimes doesn't start at the beginning, nor end at the end!): "The attached image shows a mathematics text. The text begins with the words "Let $x$ be an element" and ends with the words "integrate over the". Please show me the LaTeX code to typeset the document correctly. Ensure that you begin at the beginning and end at the end. DO NOT start your LaTeX code with \documentclass; just start with \begin{document}" ... and I repeat that instruction with every page! If it goes wrong, I start a new chat. – CrimsonDark Feb 11 '24 at 03:27

@eigengrau ... one page at a time. I usually upload images, not PDFs. With old works, I extract the images (on a Linux machine) with pdfimages. JPEGs, not PNGs: PNGs blow ChatGPT's mind. – CrimsonDark Feb 11 '24 at 03:30

The “Cambridge Dictionary” definition just didn’t realise that “work” might be created by someone or something that is not a person. If an “AI” had enough real intelligence to create a work on its own, that could lead to plagiarism. – gnasher729 Feb 11 '24 at 08:36

@CrimsonDark Thanks again. I tried using my free ChatGPT-3 account (uploading an image to the web, and pointing ChatGPT to it), but it failed miserably. It typeset just a few text words, none of the $math$, and none of the drawings. I tried both with a GIF (first using convert .pdf --> .gif) and with the scanned PDF. Then I pointed it to your answer here https://academia.stackexchange.com/questions/206563#206569 and asked if ChatGPT-4 is required. It said it didn't know. Are you using ChatGPT-4? I didn't want to pay for it until I'm reasonably sure it will work on my more elaborate stuff. – eigengrau Feb 11 '24 at 13:40

@eigengrau Yes, I am using ChatGPT-4. Try it for a month, no harm... no guarantees. It's taken a while to get the hang of it. And ChatGPT-3... completely useless, for almost everything. (No, I am not working for OpenAI! :) ) – CrimsonDark Feb 12 '24 at 11:50

Here is a good rule of thumb to understand what constitutes plagiarism.

Plagiarism is - in spirit - about claiming intellectual credit for yourself that you did not do, but copied from someone (or had someone do for you).

[That's why self-plagiarism is called "plagiarism", although the name is a bit controversial. The term focuses not so much on stealing credit from others (which regular plagiarism also implies) as on claiming credit that you don't deserve (because you already received it earlier).]

Back to your question: under the above interpretation, as long as the AI does not carry out any intellectual work that you are supposed to do yourself, you should be fine.

For instance, if the course involves actually learning to use LaTeX, it would be plagiarism. If the course only involves somehow typesetting the material in whatever way is suitable, you could ask the tutor if it is legitimate to use an AI helper for that purpose and to cite it if relevant.

"Plagiarism is - in spirit - about claiming intellectual credit for yourself that you did not do, but copied from someone (or had someone do for you)." And looked at this way, the problem is completely solved by being fully honest and open about what you've done: that's why citations and quotation marks help. In this case, OP is probably asked to fill in some sort of (digital) form when submitting the work, and will have an opportunity on that form to declare this as "use of an automated translation tool" or similar. – Daniel Hatton Feb 09 '24 at 13:40

@DanielHatton Indeed. Though nobody puts quotes around every word they looked up in the dictionary or that Grammarly fixed. With the increasing power of tools, the boundaries where external work needs to be acknowledged will become increasingly blurry. Had a human assistant typeset your math, you would probably acknowledge and thank them for the typesetting, but not cite them or list them as a co-author. – Captain Emacs Feb 09 '24 at 15:08

Self-plagiarism isn't called "plagiarism", it's called "self-plagiarism". That doesn't mean it's plagiarism any more than calling something a "sweet potato" means it's a potato. Of course, I'm not disputing that both of the former are misconduct, or that both of the latter are vegetables. – Especially Lime Feb 09 '24 at 15:21

@EspeciallyLime I also used to fall for that trap, but then I understood where it comes from. The word plagiarism has two aspects, and they are linked but not equivalent: 1. "taking undeserved credit from others", 2. "claiming undeserved credit for oneself". Self-plagiarism gets the "-plagiarism" epithet not because of 1., but because of 2. Of course, that implies that credit is a quantity to be "counted", but in a publication-beancounting society, that's pretty much a given. – Captain Emacs Feb 09 '24 at 15:58

@CaptainEmacs does that second definition come from an actual dictionary? I couldn't find anything similar. – Especially Lime Feb 09 '24 at 16:05

@EspeciallyLime Not that I know of, but I kept wondering why the term self-plagiarism took hold and is so persistently used. That's the explanation that I came up with to explain it to myself. Feel free to disagree; to me, it finally made sense. – Captain Emacs Feb 09 '24 at 16:31

@CaptainEmacs I mean... the practice needs a name, because it's a dangerous enough practice to need to be openly discussed. (Imagine publishing the same empirical dataset twice, and leaving the community thinking there are two independent datasets that say the same thing, which is a very different epistemic situation from just having one.) Once we realize the practice needs a name, "self-plagiarism" seems as good a name for it as any. – Daniel Hatton Feb 11 '24 at 12:29

@DanielHatton There are different issues here. One is multiple use of the same data set. If disguised, it's as bad as data manipulation/faking. The other one is having multiple publications from the same author which copy each other or are very similar. I must say that I find the latter only a hard problem for hiring committees who count papers. There are authors who tend to repeat the same point over and over, in different words, and nobody considers that self-plagiarism. Maybe they have only one thought. Maybe there are all these different facets of the one thought. – Captain Emacs Feb 11 '24 at 12:51

@DanielHatton Apart from that, I did not criticise the word self-plagiarism, but I saw many people criticise the choice of word. As a consequence, I wondered, as I said, why people used it. And this was the explanation I came up with for myself. – Captain Emacs Feb 11 '24 at 12:53

self-plagiarism is an oxymoron as plagiarism is the practice of taking someone else's work or ideas and passing them off as one's own. – deep64blue Feb 11 '24 at 20:53

@deep64blue This is precisely what I was wondering about. If, however, you interpret plagiarism as having two different aspects to its meaning, 1. taking credit away from someone else vs. 2. claiming undeserved credit, then under interpretation 2 the name self-plagiarism makes sense again. It is clear that meaning 1 was originally the dominant one, but words exhibit semantic creep, so, without being a language expert, I can imagine that something like that may have taken place. Maybe someone with linguistic expertise can clarify. – Captain Emacs Feb 12 '24 at 01:37

Even sense 2 doesn't fit as you're not claiming undeserved credit when it's your own work! – deep64blue Feb 12 '24 at 12:58

@deep64blue If you read my comments again, you will notice that I refer to committees counting papers. Having two papers saying the same thing, in US-style evaluation scenarios, is essentially claiming duplicate credit. I personally do not agree with this style of evaluation, but this is the norm in many locations. This is why CVs have separate Journal sections, because they split out similar earlier conference papers. I tried to understand the origin of the term 'self-plagiarism' and why it's frowned upon. If you have a better idea why it's considered harmful, let me know. – Captain Emacs Feb 12 '24 at 16:37

As many answers have already pointed out, this would not be plagiarism. However, you should be aware that by using an AI service, you turn your data over to its provider; that is, future users might plagiarise you. Using the example provider that you linked, here is their privacy FAQ (emphasis mine):

New and different images allow Mathpix to improve current recognition features and add new recognition features. If you allow Mathpix to use your images, anonymized versions of your images may be chosen for algorithm training purposes by our QA team. Regardless of if an image is selected by QA or not, all images in the QA database are permanently deleted after 60 days.

So while your use might not constitute plagiarism, others might plagiarise you through a future model trained on your images. If it's just homework, it is probably okay, but a Master's thesis may already contain ideas worth pursuing in a PhD.

In this case, the provider suggests that you can opt out. This would be something to always check when you use an AI provider, and proceed with caution.

Ask the person teaching the course

Only they know whether the (secondary) goal of the course is to teach you LaTeX skills or not. If it is, using an unapproved tool might circumvent that didactic goal. If it isn't, it's still up to the teacher to decide which tools are ok to use for their course and which aren't.

And don't ask about plagiarism. That's not the issue here. Ask whether you are allowed to use that tool for your coursework.

Since these AI tools are fairly new, there is no "established consensus" on this issue, so be prepared for answers ranging from "Absolutely not!" to "Sure, why would you even ask?" to "Uh, no idea, I need some time to think about that"¹.

¹ Next week on academia.SE: "Should I allow my student to use an AI tool for LaTeX markup?"

Oh shoot, I wish I had seen your comment sooner. I asked specifically about plagiarism this morning. All of them seemed to echo "that's not an issue here". – SBJ Feb 09 '24 at 20:23

I'm going to go against the consensus here and argue that what you are doing is self-defeating, whether or not it is technically plagiarism.

I'm sure your lecturers aren't asking for submissions in LaTeX in order to torture you. It's possible they are doing it for their own convenience (because well-written LaTeX is much easier to read than handwriting); but it seems much more likely that training you to use LaTeX is part of the course goals – LaTeX is, like it or not, the "industry standard" for typesetting mathematical documents and so being able to write in LaTeX is a necessary skill for a mathematician. If so, then using a fancy OCR program to generate TeX from handwritten math is cheating.

It's really not that hard to pick up; with a little practice, you can get fluent enough that you can even take notes in lectures directly into LaTeX in real time ("live-TeXing"). Unless you have a disability which prevents you from using a normal keyboard (or something of that sort), then I suspect that in the time it's taken you to find this handwriting-recognition website, learn to use it, then fret about whether it's allowed or not, you could have sharpened your LaTeX skills to the point where you don't need to cheat any more.

I agree with your main point (+1), but actually, from the examples in this thread, the LaTeX it generates is better than a lot of the code I see from human authors. So playing around with this tool and looking at the code it produces might be an efficient way to learn LaTeX. – Especially Lime Feb 09 '24 at 11:40

"LaTeX is, like it or not, the 'industry standard' for typesetting math." Well, that depends on what industry you work in. If you’re an academic whose business is writing papers in math or physics or comp sci, then I’d agree. If you’re writing mathematical documents in industry, or you’re a high school math teacher, or you’re an academic in engineering or economics, then (like it or not) MS Word and Google Docs are more widely used. – bubba Feb 09 '24 at 12:45

Regarding "being able to write in LaTeX is a necessary skill for a mathematician", that may be true currently, but it's unlikely to be the case for long given that there are already tools that do a really good job of generating the LaTeX. I would argue there's nothing self-defeating about not learning a soon to be redundant skill. – cigien Feb 09 '24 at 12:53

-

If you are a whiz in LaTeX, you will probably keep honing this skill. However, if you want to write math instead of worrying about how to move characters into the right position, then such a tool is for you. Personally, I enjoy coding a lot, but dedicated and clever LaTeX manipulation to get the typesetting right, not so much. – Captain Emacs Feb 09 '24 at 15:10

-

Thanks for your consideration. Yes, I agree with your main point. However, I have used LaTeX for multiple years and 100+ hours, and it still takes me multiple hours to write up an in-depth assignment. Consider me behind the curve or whatever you want, but it took me about 15 minutes to figure out the website, and another 24 hours to realize how useful a learning tool this could be for me and for colleagues of mine who struggle with LaTeX more than I do. I also talked to my professors, and none of them considered this "cheating", as the point of each class isn't learning LaTeX. – SBJ Feb 09 '24 at 20:18

-

@cigien “I would argue there's nothing self-defeating about not learning a soon to be redundant skill” – when I first learned LaTeX people were already dismissing it as outdated and predicting its imminent demise. That was in 1998. Yet here we are a quarter of a century later discussing this question. – David Loeffler Feb 10 '24 at 08:39

-

Ah, so you think that knowing that \int is the LaTeX code for the integral sign, or \pm for plus-or-minus, has scientific value for human knowledge? – NotaChoice Feb 12 '24 at 02:47

-

This question, like most questions of the form "Is X plagiarism?", can easily be answered by going back to the fundamental reasons why plagiarism is considered such a grave offense against academic integrity in the first place.

Traceability of ideas

The primary and most important reason why plagiarism is a Bad Thing™ is the importance of traceability of ideas in academia, science, research, culture, art, music, …, and really society in general, but especially in academia.

Imagine you find a flaw in someone's argument. Or, since you are a mathematician, imagine you find a flaw in someone's proof. It's a subtle flaw that took a while to spot, so other authors have already written new papers relying on the theorem.

Traceability guarantees that it is possible to find every paper relying on the theorem and see if the flaw affects them or not. If the author of the original paper got the idea for the proof from somewhere else, then traceability guarantees that you can find the root cause of the flaw, and find other papers that might have picked the flawed idea up from the original source.

Plagiarism is a problem because it breaks the chain of ideas, so an idea can no longer be traced backwards to its original source or forwards to all the other ideas that build on it. This means that any flaw discovered in the idea cannot be fixed: it cannot be fixed at the root, because you don't know where the root is. It often cannot be fixed by the plagiarist either, because, well, they "stole" the idea and there is a chance they don't actually understand how it works!

Even if there isn't a flaw in the idea, there is still a problem: say you want to build your idea on top of someone else's idea, but there is some detail you don't quite understand. So you contact the author and ask them about it. If that author plagiarized the idea, there is now a possibility that they don't understand the idea well enough to answer your question. In the unrealistic best case, they will admit to plagiarism and point you to the original source. The more realistic case is that they just ghost you. The worst case is that they make something up that is convincing enough that you don't notice right away, and you go off and start building your idea on top of this made-up answer, potentially wasting a lot of time, resources, money, and effort until you notice that something is wrong.

Traceability is also one of the reasons why self-plagiarism is a thing: it still "breaks the chain". If you use the same idea in two different papers without properly referencing and citing it, and someone looks at one of the two papers and finds a flaw, they have no way of knowing that there is a whole other paper out there with the same flaw. Other authors may have cited only one of the two papers, so there may be a whole separate chain of papers built on the flawed idea that nobody knows about.

Recognizing contributions

The reason that is often cited as the most important, or even the only, reason (but which is actually secondary in my opinion) is that contributing good ideas should be recognized. An author, group, or organization that advances the field should get credit for this advancement. In other words, the giants whose shoulders you stand on should be rewarded for carrying the load.

This is another, although admittedly much weaker, argument for why self-plagiarism is a thing: you get twice the recognition for one idea. However, I find this a pretty weak argument, and I can see why people don't understand what is wrong with self-plagiarism if plagiarism is presented merely as "stealing someone's credit". That is why, in my opinion, focusing on the traceability aspect is important.

Conclusion

I think it should be obvious when applying the two criteria above that what you are doing is not plagiarism.

However, as pointed out in other answers and comments, it might still be academic misconduct in other ways. In particular, if demonstrating proficiency in LaTeX is one of the course goals, then it might be considered cheating. Or it might be considered clever use of available tools.

-

I really like the traceability argument and generally your description of why plagiarism is a problem. However, in the specific case of OP, the thing you consider secondary is actually primary, namely getting credit. This is a class, and thus OP's original contribution is of the essence here. As for self-plagiarism, I agree with your take on it when it comes to taking credit from others; however, the problem specifically for a student is getting undeserved credit (whether through the work of others or through cloning one's own work). – Captain Emacs Feb 09 '24 at 15:17

-

In the professional realm, I agree that the argument against self-plagiarism is weaker. It's about not getting credit counted multiply for oneself, but it is only a problem because it's fashionable to count papers rather than value insights. – Captain Emacs Feb 09 '24 at 15:18

This isn't really an answer to your question but too long for a comment. (Largely an elaboration on David Loeffler's answer.)

In my math graduate courses I require all of the homework to be typed. I don't require the students to use any specific software, but I encourage them to start using LaTeX if they aren't already. I would not consider the use of AI tools that you describe to be plagiarism, or any other kind of cheating in my classes.

However, there are three reasons I require the homework to be typed:

1. So that it is legible.

2. When students take their handwritten scratchwork and type it up, they go through another round of thinking about their arguments (in my experience, more thorough than when they just recopy by hand) and end up with better reasoned (and more likely to be correct) work.

3. They will all eventually need to be able to use LaTeX fluently, and it's better to start learning sooner rather than later.

Your system partially defeats the second and third reasons. Only partially, since you're still typing up the text part yourself, plus reading and tweaking the AI's LaTeX output. So I get, and don't fully disagree with, your choice to do things this way at this stage, when you are pressed for time and focusing on learning the math. But although as I said I wouldn't consider what you're doing to be cheating, I see it as not really in the spirit of the requirement.

-

#2 is an excellent reason. #3 is also great. Moreover, I have always found that every tool meant to speed things up LaTeX-wise ends up wasting even more of my time. Frontends for bibliographies stop working at critical moments, or are incompatible with my collaborators' setups; auto-completions end up messing up the code, etc. In the end, it is better to just bite the bullet and type LaTeX directly. (I have never used these AI tools, but I suspect they are still at the stage where they are more of a waste of time.) – Giuseppe Negro Feb 09 '24 at 17:01

-

Regarding #3: I’d say it’s pretty unlikely that the average undergrad in the average math class will end up in a job where LaTeX fluency is required. – bubba Feb 13 '24 at 06:57

-

@bubba Agreed. Which is part of why I don't have that same requirement in my undergrad classes. For the PhD students, they'll at least need LaTeX fluency for writing their theses, regardless of their post-degree employment. – Mark Meckes Feb 13 '24 at 16:54

In my opinion there should be no issue with using such methods for mechanistic conversions (as opposed to generating text, solutions, ideas, etc.), assuming you check the output for accuracy, of course. In spirit, it is the same as using OCR. Further, I am not aware of anyone objecting to the use of Detexify, which also uses a machine learning method. I see no clear reason why it would be OK to use machine learning methods to recognize one symbol at a time, but not entire equations or sections. That said, Mathpix's marketing leans heavily into the AI angle, so it may be a likelier target of overly broad policies. Finally, the data security concern raised in another answer is worth taking into account.

As some answers have mentioned, courses and assignments where the goal is learning to use (La)TeX can be different in this regard from cases where (La)TeX is just used to typeset documents, and it's worth asking the instructor about both rules and advice. Jumping straight to automation tools like this may mean you miss out on learning useful structures and how to read TeX effectively. At the same time, though, I personally tend to think that such courses should introduce useful tools like this (and, e.g., various editors, online table generators, and the TeX StackExchange!) that help overcome the (often exaggerated) entry barriers to learning (La)TeX. Having to constantly flip through tables of symbols until you had learned enough commands for math symbols was frustrating, but it hasn't been necessary for a while now, and courses ought to reflect this reality.

-

Heck, using Word’s spellchecker is using a fairly primitive language model, and I doubt that anyone cares, aside from in an elementary-school spelling class. And one does need to check the suggestions at times, particularly for technical terms. – Jon Custer Feb 09 '24 at 14:11

-

If you entered a spelling competition, it would be cheating. If there were a competition for “most beautiful LaTeX output of complex formulas”, it would be cheating. Since what you are producing is maths, it’s not. – gnasher729 Feb 11 '24 at 08:40

I saw handwriting input for equations years ago. It was used with Word, but used a TeX-like format, at least as an intermediate step. That required writing on a graphics tablet rather than scanning, but fundamentally it's the same concept (and akin to a cleverer version of automatically drawing bounding boxes around characters and feeding them to Detexify).

So this is an improved input tool. It's not plagiarism.

That doesn't mean it's not cheating in a LaTeX course, where the goal is to learn to write your own code. But there are also click-and-place equation generators for TeX and I'd see those in the same light.

I think you should talk to the prof, as it's possible that learning LaTeX is one of their educational objectives.

That said, IMO, if you use a tool to turn your handwritten notes into LaTeX, and then prominently cite the tool and state in your submissions how the LaTeX was generated, then this is NOT an academic honesty problem (with the caveat that there may be language in the syllabus saying that it IS an academic honesty problem -- maybe "all turned-in work must be your own" or some such -- in which case it assuredly is). This way, you haven't claimed, in any way, personal provenance over work that isn't yours. You may certainly lose points for not doing it "right", but that doesn't make it an honesty issue.

What is the assignment about?

Is the evaluation based on the quality of the LaTeX code?

If yes, and learning LaTeX is part of the course and therefore an essential part of the hand-in? -> Don't use it!

If not, and it is about the content, and the format is simply required to be LaTeX? -> No problem!

Why this is not a problem

(stated well in other answers)

Assumption: as stated, the tool does not do anything generative. Even if other sources were used to train it, other sources have also been used to train humans. The tool (or its makers) should credit the sources used for training, but this doesn't mean that any of their content ends up in your work.

Plagiarism applies to ideas and content. You could cite the tools you use (in academia, that would surely be the right way), such as LaTeX itself or perhaps a compiler. But whether you start from handwritten text, use Word and a converter, or write with a keyboard or voice prompts is irrelevant.

Plagiarism: no.

Other academic misconduct: no, unless one of the purposes of the course or the assignment is for you to learn LaTeX, in which case you would be cheating.

If you're not certain, you can always ask your instructor.
